Bare metal servers are often associated with better performance and stronger isolation, but those advantages come less from raw hardware specifications and more from how workloads interact with dedicated resources. When applications run directly on physical infrastructure without shared scheduling layers, system behavior becomes easier to predict and easier to control.

Understanding this difference requires looking beyond marketing claims.

Bare metal environments behave differently because they remove abstraction layers that normally sit between workloads and hardware. Performance and isolation are closely linked in this model, since both depend on the same underlying principle: exclusive access to system resources.

What Makes Bare Metal Performance Different?

Bare metal performance differs from virtualized environments because workloads interact directly with physical hardware rather than through multiple layers of resource abstraction. This changes how compute resources are scheduled, how memory is accessed, and how storage and network operations are handled under load.

Direct Hardware Access

In a bare metal environment, applications run directly on the operating system installed on the physical server. By default, no hypervisor schedules workloads across shared infrastructure, which means fewer layers exist between software and hardware.

Removing these layers reduces the work required to translate application requests into hardware operations. With hardware-assisted virtualization, most ordinary CPU instructions already run natively inside a virtual machine, so the savings concentrate in the operations a hypervisor must mediate, such as memory address translation, interrupt handling, and storage and network I/O. While the difference may be small for lightweight workloads, it becomes more noticeable when systems process large volumes of transactions or sustained computational tasks.

CPU and Memory Behavior

Processor scheduling behaves differently on bare metal because compute resources are not shared across multiple tenants. Applications have dedicated access to the server’s CPU cores and system memory, eliminating the need for the hypervisor to distribute processing time among competing workloads.

This leads to more consistent compute performance. Tasks do not experience delays caused by scheduling conflicts with neighboring virtual machines, and memory access patterns remain stable because RAM is not overcommitted or dynamically reassigned between tenants.

The result is not necessarily higher peak performance, but a system that behaves more predictably when workloads increase.

Storage and Network Stability

Storage and network operations also benefit from dedicated resource paths. In shared environments, multiple workloads may send disk or network requests through the same virtualized infrastructure layers, which can introduce contention and latency variation.

Bare metal servers remove many of these shared layers. Storage devices connect directly to the operating system, and network interfaces handle traffic for a single environment rather than multiple tenants. This often produces more stable throughput and more consistent latency when systems operate under sustained load.

For applications that rely on steady I/O performance, this stability can be as important as raw speed.
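To make "stability" measurable, it helps to compare tail latency with median latency rather than looking at averages alone. The sketch below assumes you already have per-request latency samples in milliseconds (for example, from a load test). The helper names `percentile` and `summarize` are hypothetical, the percentile uses the simple nearest-rank method, and the two sample sets are illustrative values, not measurements.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def summarize(latencies_ms):
    """Median, 99th percentile, and the gap between them (a simple jitter measure)."""
    p50 = percentile(latencies_ms, 50)
    p99 = percentile(latencies_ms, 99)
    return {"p50": p50, "p99": p99, "spread": p99 - p50}


if __name__ == "__main__":
    # Illustrative samples only, not measurements.
    steady = [2.0] * 95 + [2.5] * 5      # occasional mild slowdowns
    contended = [2.0] * 90 + [9.0] * 10  # periodic stalls behind other I/O
    print("steady:   ", summarize(steady))
    print("contended:", summarize(contended))
```

Both sample sets share the same 2.0 ms median, yet the contended set's p99 is 9.0 ms versus 2.5 ms for the steady set. Tracking the p99-to-median spread, rather than the mean alone, is one way to quantify the steadiness described above.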

Why Isolation Shapes Bare Metal Performance

Performance improvements on bare metal do not come only from direct hardware access. They also come from how infrastructure isolates workloads from one another.

When resources are shared across multiple tenants, performance can vary depending on how those resources are allocated and scheduled. Bare metal avoids many of these situations by dedicating the entire server to a single environment.

The Noisy Neighbor Problem

In shared environments, infrastructure resources are distributed across many virtual machines or workloads. Even when each system is allocated a defined amount of CPU, memory, or storage throughput, those resources still originate from the same physical hardware.

When another tenant suddenly increases its workload, the shared infrastructure has to absorb the extra demand. Storage queues can grow longer, network buffers can become congested, and the hypervisor's CPU scheduler may give more time to other virtual machines. This is often called the “noisy neighbor” problem: one workload indirectly degrades the performance of another.
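The interference can be illustrated with a deliberately simplified model: one shared device that serves requests strictly in arrival order with a fixed service time. This is a toy sketch, not a model of any real storage or network stack; `simulate_shared_queue` and all arrival times are hypothetical. Our tenant sends a request every 10 ms, and in the second run a neighbor submits a burst of eight requests just before one of ours.

```python
def simulate_shared_queue(our_arrivals, neighbor_arrivals, service_time):
    """Toy model: one device serves requests from two tenants in FIFO order.

    Returns the queueing delay (start time minus arrival time) of each of
    our requests, in the same time unit as the inputs.
    """
    # Tag each request with its owner; on equal timestamps, "neighbor" sorts
    # before "ours", so the neighbor is served first.
    events = sorted(
        [(t, "ours") for t in our_arrivals]
        + [(t, "neighbor") for t in neighbor_arrivals]
    )
    device_free_at = 0.0
    our_waits = []
    for arrival, owner in events:
        start = max(arrival, device_free_at)   # wait if the device is busy
        device_free_at = start + service_time  # busy until this request completes
        if owner == "ours":
            our_waits.append(start - arrival)
    return our_waits


if __name__ == "__main__":
    ours = [0.0, 10.0, 20.0, 30.0, 40.0]  # one request every 10 ms
    burst = [19.5] * 8                     # neighbor burst just before our t=20 request
    print("alone:        ", simulate_shared_queue(ours, [], 1.0))
    print("with neighbor:", simulate_shared_queue(ours, burst, 1.0))
```

Nothing about our own workload changes between the two runs, yet the request arriving at 20 ms waits 7.5 ms because the neighbor's burst is ahead of it in the shared queue. On a single-tenant server, the neighbor's arrivals would simply not exist.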

Because bare metal servers run only a single tenant environment, this type of cross-tenant interference does not occur. The workload operating on the server controls the full capacity of the underlying hardware.

Physical Isolation vs Logical Isolation

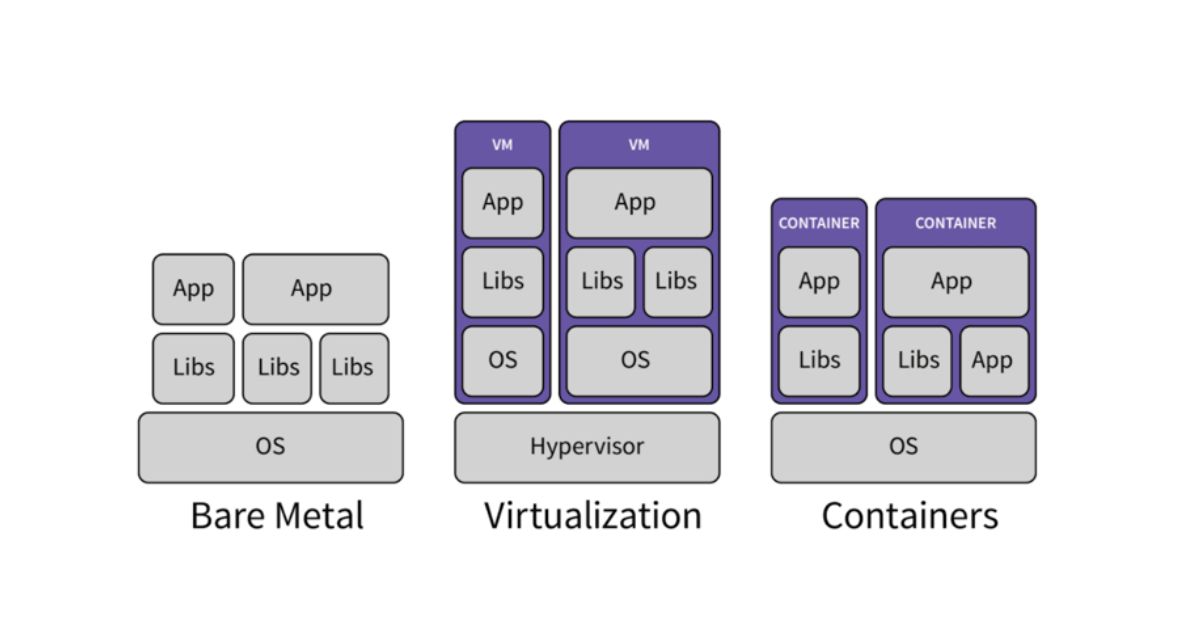

Virtualized environments typically rely on logical isolation, where the hypervisor separates workloads into independent virtual machines. This approach is effective for managing multiple tenants on the same hardware, but it still requires a shared infrastructure layer to coordinate resource access.

Bare metal servers provide physical isolation instead. The entire hardware system, including the CPU, memory, storage devices, and network interfaces, belongs to one operating environment. Resource scheduling occurs within the operating system itself rather than through a hypervisor distributing resources across multiple tenants.
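Because the operating system sees the server's processors directly, CPU placement decisions can also be made there. As one illustration, Python's standard library exposes Linux CPU affinity through `os.sched_getaffinity` and `os.sched_setaffinity`; the sketch below pins the current process to chosen CPUs. `pin_to_cores` is a hypothetical helper and the CPU IDs are examples. On bare metal, these IDs correspond to physical cores or hardware threads; on a virtual machine, the same call pins to vCPUs that the hypervisor still schedules onto shared hardware.

```python
import os


def pin_to_cores(cores):
    """Restrict the current process to the given logical CPU IDs (Linux).

    Returns the affinity set the kernel reports after the change.
    """
    if not hasattr(os, "sched_setaffinity"):
        raise NotImplementedError("os.sched_setaffinity is not available on this platform")
    allowed = os.sched_getaffinity(0)  # CPUs this process may currently use
    requested = set(cores) & allowed
    if not requested:
        raise ValueError(f"none of {sorted(cores)} are in the allowed set {sorted(allowed)}")
    os.sched_setaffinity(0, requested)  # pid 0 means the calling process
    return os.sched_getaffinity(0)


if __name__ == "__main__" and hasattr(os, "sched_getaffinity"):
    available = sorted(os.sched_getaffinity(0))
    print("available CPUs:", available)
    print("pinned to:     ", sorted(pin_to_cores(available[:2])))  # example: first two CPUs
```

Pinning is a tuning option rather than a requirement; the point is that on single-tenant hardware, the operating system scheduler is the last layer making placement decisions.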

This difference simplifies performance behavior because fewer components participate in resource allocation.

Isolation Beyond Speed

Isolation is not only about achieving higher performance. In many cases, it matters because it produces consistent system behavior over time.

When infrastructure variables are limited to a single environment, performance testing becomes easier to reproduce. Capacity planning becomes more reliable because workload behavior does not depend on unknown neighboring tenants. Operational teams can observe how systems behave under load without accounting for external interference.
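One simple way to check reproducibility is to run the same benchmark several times and compare the spread of the results with their mean, a ratio known as the coefficient of variation (CoV). The sketch below uses only Python's standard library. `cpu_task` is a hypothetical stand-in workload and `measure_runs` a hypothetical helper; what counts as an acceptable CoV is left to each team, and no particular threshold is implied here.

```python
import statistics
import time


def cpu_task(n=200_000):
    """Hypothetical stand-in workload: a fixed amount of integer arithmetic."""
    total = 0
    for i in range(n):
        total += i * i % 7
    return total


def measure_runs(task, runs=10):
    """Time `task` repeatedly and report mean, standard deviation, and
    coefficient of variation (stdev / mean) of the wall-clock durations."""
    if runs < 2:
        raise ValueError("need at least two runs to estimate variation")
    durations = []
    for _ in range(runs):
        start = time.perf_counter()
        task()
        durations.append(time.perf_counter() - start)
    mean = statistics.mean(durations)
    stdev = statistics.stdev(durations)  # sample standard deviation
    return {"mean_s": mean, "stdev_s": stdev, "cov": stdev / mean}


if __name__ == "__main__":
    result = measure_runs(cpu_task)
    print(
        f"mean={result['mean_s'] * 1000:.2f} ms  "
        f"stdev={result['stdev_s'] * 1000:.2f} ms  "
        f"CoV={result['cov']:.1%}"
    )
```

Running the same script repeatedly, and at different times, on shared and on dedicated infrastructure gives a rough indication of how much run-to-run variation comes from the environment rather than from the workload itself.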

For workloads that operate continuously or process large volumes of transactions, this level of consistency often becomes the most valuable advantage of bare metal infrastructure.

Why Bare Metal Performance Is Often More Predictable

The performance characteristics described earlier lead to one practical outcome: predictability.

When workloads run on dedicated hardware with fewer shared infrastructure layers, system behavior becomes easier to anticipate under different operating conditions.

Shared environments introduce more variables. Resource scheduling decisions, hypervisor-level balancing, and competing workloads can cause small fluctuations that accumulate during periods of heavy activity. These variations do not necessarily indicate system failure, but they can make performance behavior harder to model.

Bare metal removes many of these variables. A single environment controls CPU, memory, storage, and networking resources. As a result, performance changes usually reflect the workload itself rather than external infrastructure activity. Engineers can interpret test results more clearly and plan capacity with greater confidence.

At HostScore, this predictability is one of the factors we consider when evaluating dedicated and bare metal infrastructure providers. Platforms such as Atlantic.Net, for example, allocate single-tenant bare metal resources that emphasize consistent hardware ownership. When applications run under sustained load, exclusive hardware access helps maintain stable behavior across both testing and production environments.

Bare metal infrastructure therefore behaves more consistently as demand grows. That consistency makes performance easier to measure, understand, and maintain over time.

Where Performance and Isolation Matter Most

The predictability described above becomes most noticeable when systems operate under sustained load or process large volumes of data. In these environments, small variations in resource availability can compound quickly, affecting response times, throughput, or system stability. Bare metal infrastructure reduces those variables by ensuring that the workload controls the full capacity of the server.

Database Systems

Large relational or analytics databases rely heavily on consistent CPU scheduling, memory access, and disk throughput. When those resources fluctuate, query performance can become unpredictable. Running these systems on single-tenant hardware helps maintain stable execution patterns and more reliable query timing.

Latency-Sensitive Applications

Services that depend on real-time processing, such as financial transaction systems, streaming pipelines, or high-frequency data processing, often require consistent network and compute performance. Eliminating shared infrastructure layers helps reduce the variability that can appear in virtualized environments.

High-Throughput Workloads

Systems that process large datasets, perform continuous analytics, or run long-duration compute jobs tend to behave more predictably when they operate on infrastructure that remains dedicated to the workload over time.

In each of these cases, the advantage of bare metal is not simply raw speed. It is the ability for infrastructure to behave consistently as demand increases, allowing engineers to understand system limits and plan capacity with greater confidence.

Final Takeaway: Performance and Isolation Depend on Resource Ownership

Bare metal performance advantages come from one core principle: resource ownership. When applications run directly on dedicated hardware, they avoid many of the shared scheduling layers and competing workloads that can influence performance in virtualized environments. CPU cycles, memory access, storage I/O, and network capacity remain controlled by a single system rather than distributed across multiple tenants.

This ownership also strengthens isolation. With no neighboring workloads competing for the same infrastructure, performance behavior becomes easier to predict and easier to reproduce across testing and production environments. For teams operating latency-sensitive systems, large databases, or high-throughput processing workloads, that consistency often matters more than peak benchmark numbers.

For readers evaluating platforms that implement these principles effectively, HostScore maintains a regularly updated Best Bare Metal Hosting list highlighting providers that deliver dedicated hardware performance alongside reliable infrastructure and operational transparency.